“Did you write unit tests?”

Well, uh, not exactly, because, you see…

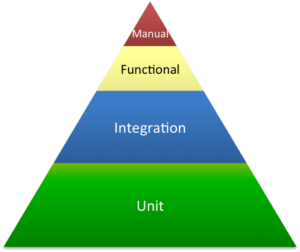

When I developed my first microservice, I was faced with this question, and I struggled to explain why I hadn’t written unit tests. It’s a common programming adage: pragmatic application of the testing pyramid is the best way to increase development velocity while ensuring the integrity of features. But for my first microservice, I had trouble defining what the base of the pyramid, a unit test, actually was.

I came out of school thinking that a unit test was supposed to run against an isolated piece of code and test a single piece of logic. My idea was right, but I hit a wall when I realized that writing both unit tests and integration tests for my microservice would have been highly redundant (and not the good kind of redundant). Pedantry would have resulted in a lot of wasted effort. In fact, it would have taken me longer to write the unit tests than it did to write the entire service. So I simply threw my hands up and, on the spot, stuttered out a weak justification. Was my approach correct?

Let’s take a look at the Swagger Petstore. This is a simple RESTful API with an interface defined in Swagger (OpenAPI) YAML. The brilliance of the OpenAPI spec is that it is language agnostic and facilitates generation of both client and server code, though that’s beside the point.

Digging through Swagger Petstore is a great way to learn about and experiment with microservices using the language of your choice. I use Python with Connexion, and over the years I have heavily used and modified this bare-bones implementation.

Let’s imagine that instead of operating on data in memory, the service is connected to a SQLite database. This is the basis, if not the extent, of production microservices that I have actually built (though not for pet stores). Once the service is built, it’s time to write tests. Here’s the testing pyramid one might reference:

At a basic level, my definition of unit tests from above is accurate. At the next stage, integration tests involve multiple components working in unison, such as the server code and database. The problem with simple microservices, and what led to trouble when it came time to deploy my first one, is that it’s not rare for 100% of methods to be CRUD operations. To oversimplify the core functionality of the microservices I have built, all they really do is wrap database queries!

The problem this presents is that a well-written suite of unit tests ends up looking very similar to a well-written suite of integration tests. The unit tests have the database mocked while the integration tests set up and tear down a real database. Aside from that, the only other difference is that unit tests are commonly white box while integration tests, when I write them, are black box.

Here’s what my get and put methods look like to start:

    from datetime import datetime

    from connexion import NoContent

    # connect() and disconnect() are helpers defined elsewhere in app.py (not shown)

    def get_pets(animal_type=None):
        conn, cur = connect()
        query = 'SELECT * from pets'
        params = []
        if animal_type:
            # Filter by type, binding the value as a query parameter
            query += ' where animal_type=?'
            params.append(animal_type)
        cur.execute(query, params)
        results = [{'id': x[0], 'name': x[1], 'animal_type': x[2], 'created': x[3]} for x in cur.fetchall()]
        disconnect(conn)
        return results, 200

    # Originally for creating and updating, now just for creating
    def put_pet(pet_id, pet):
        conn, cur = connect()
        pet_name = pet.get('name', '')
        animal_type = pet.get('animal_type', '')
        created = str(datetime.now())
        cur.execute('insert into pets VALUES (?,?,?,?)', (pet_id, pet_name, animal_type, created))
        conn.commit()
        disconnect(conn)
        return NoContent, 201

Here's what my code looks like with mocked unit tests:

    import unittest
    from unittest import mock

    from app import get_pets, put_pet, NoContent

    class MockConnection:
        def commit(self):
            return True

    class MockCursor:
        def fetchall(self):
            # Simulates an empty pets table
            return []

        def execute(self, query, params):
            return True

    def mock_connect():
        return MockConnection(), MockCursor()

    def mock_disconnect(conn):
        return True

    class TestMicroservice(unittest.TestCase):
        '''Tests for app.py'''

        # Patch decorators apply bottom-up, so the disconnect mock is passed first
        @mock.patch('app.connect', side_effect=mock_connect)
        @mock.patch('app.disconnect', side_effect=mock_disconnect)
        def test_get(self, new_disconnect, new_connect):
            '''Simple Get for empty db'''
            pets = get_pets()
            self.assertEqual(pets, ([], 200))

        @mock.patch('app.connect', side_effect=mock_connect)
        @mock.patch('app.disconnect', side_effect=mock_disconnect)
        def test_put(self, new_disconnect, new_connect):
            '''Simple put'''
            pet_created = put_pet("1", {"name": "Bobo", "animal_type": "buffoon"})
            self.assertEqual(pet_created, (NoContent, 201))

    if __name__ == '__main__':
        unittest.main()

Here's what an integration test might look like*:

    #!/bin/bash
    URL=:8080
    set -x

    ## Test 1
    # Set up
    rm -f ./int_test.db
    pid=$(./app.py int_test.db >/dev/null 2>&1 & echo $!)

    # Test
    sleep 3
    if [ "$(http -ph GET $URL/pets | grep -c "200 OK")" -ne 1 ]
    then
        echo "FAIL"
    else
        echo "PASS"
    fi

    if [ "$(http -pb GET $URL/pets | grep -c "\[\]")" -ne 1 ]
    then
        echo "FAIL"
    else
        echo "PASS"
    fi

    # Tear down
    kill -9 "$pid"
    rm -f ./int_test.db
    ## End Test 1

    ## Test 2
    # Set up
    rm -f ./int_test.db
    pid=$(./app.py int_test.db >/dev/null 2>&1 & echo $!)

    # Test
    sleep 3
    if [ "$(http -ph PUT $URL/pets/1 name=bobo animal_type=buffoon | grep -c "201 CREATED")" -ne 1 ]
    then
        echo "FAIL"
    else
        echo "PASS"
    fi

    if [ "$(http -ph GET $URL/pets | grep -c "200 OK")" -ne 1 ]
    then
        echo "FAIL"
    else
        echo "PASS"
    fi

    # Purposefully left incomplete
    if [ "$(http -pb GET $URL/pets | grep -c "\"name\": \"bobo\"")" -ne 1 ]
    then
        echo "FAIL"
    else
        echo "PASS"
    fi

    # Tear down
    kill -9 "$pid"
    rm -f ./int_test.db
    ## End Test 2

* This is the ugliest integration testing suite I have ever written. It is rife with bad practices that I will break down in a future article. Though written in bash, the similarities between what the integration tests are doing and what the unit tests are doing are evident.

Especially for simple microservices, unit tests look a hell of a lot like integration tests. In the same vein, the integration tests and functional tests can be the same thing. Manual tests, which I do carry out in situations where test coverage isn't great, involve me submitting queries using the Swagger UI or using Postman.

In practice, I only write unit tests for code that can be decomposed from an integration test, which mostly means internal helper functions that don't interact with external dependencies. The speed difference between integration tests and unit tests (spinning up a DB, populating the DB, sending an HTTP request, and waiting for an HTTP response vs. everything being mocked out and snappy) is negligible for lightweight services. Also, black box testing, at a higher level of abstraction, is going to be more isolated from minor code changes.
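As a minimal sketch of the kind of unit test this still leaves room for, consider the Python below. The helpers `row_to_pet` and `build_pets_query` are hypothetical; they are not part of the service shown above and only illustrate logic that could be pulled out of a handler and tested without a database or any mocks.

```python
import unittest


def row_to_pet(row):
    """Convert an (id, name, animal_type, created) row tuple into a dict."""
    pet_id, name, animal_type, created = row
    return {'id': pet_id, 'name': name, 'animal_type': animal_type, 'created': created}


def build_pets_query(animal_type=None):
    """Return the SQL string and bound parameters for listing pets."""
    query = 'SELECT * FROM pets'
    params = []
    if animal_type:
        query += ' WHERE animal_type=?'
        params.append(animal_type)
    return query, params


class TestHelpers(unittest.TestCase):
    def test_row_to_pet(self):
        row = ('1', 'Bobo', 'buffoon', '2020-01-01 00:00:00')
        expected = {'id': '1', 'name': 'Bobo', 'animal_type': 'buffoon',
                    'created': '2020-01-01 00:00:00'}
        self.assertEqual(row_to_pet(row), expected)

    def test_query_without_filter(self):
        self.assertEqual(build_pets_query(), ('SELECT * FROM pets', []))

    def test_query_with_filter(self):
        self.assertEqual(build_pets_query('cat'),
                         ('SELECT * FROM pets WHERE animal_type=?', ['cat']))
```

Saved to a file, tests like these could be run with `python -m unittest`. Nothing here touches a connection or cursor, which is what makes them cheap to write as true unit tests.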

To confidently answer the question that I stumbled through many months ago: for a service like the one above, I would write zero unit tests and have the bulk of the testing occur in a suite of integration tests (though preferably ones not written in bash). It is for this reason that I don't feel the testing pyramid jibes with the service-oriented architecture style. In my head, the shape of the pyramid is much more like a diamond, or heptagon, or inverted pyramid, depending on the existence and complexity of the UI.
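For illustration, here is a rough sketch of what a non-bash integration test could look like using Python's `unittest`. It rests on several assumptions: the handlers take a hypothetical `db_path` argument, the `pets` schema is guessed from the queries shown earlier, `None` stands in for Connexion's `NoContent`, and the tests call the handlers directly against a real temporary SQLite file instead of going over HTTP, so this is not a fully black box test.

```python
import os
import sqlite3
import tempfile
import unittest
from datetime import datetime


def connect(db_path):
    conn = sqlite3.connect(db_path)
    return conn, conn.cursor()


def create_schema(db_path):
    # Assumed schema, inferred from the four-column insert in put_pet
    conn, cur = connect(db_path)
    cur.execute('CREATE TABLE IF NOT EXISTS pets '
                '(id TEXT PRIMARY KEY, name TEXT, animal_type TEXT, created TEXT)')
    conn.commit()
    conn.close()


def put_pet(db_path, pet_id, pet):
    conn, cur = connect(db_path)
    cur.execute('INSERT INTO pets VALUES (?,?,?,?)',
                (pet_id, pet.get('name', ''), pet.get('animal_type', ''),
                 str(datetime.now())))
    conn.commit()
    conn.close()
    return None, 201  # None stands in for NoContent


def get_pets(db_path, animal_type=None):
    conn, cur = connect(db_path)
    query = 'SELECT * FROM pets'
    params = []
    if animal_type:
        query += ' WHERE animal_type=?'
        params.append(animal_type)
    cur.execute(query, params)
    results = [{'id': r[0], 'name': r[1], 'animal_type': r[2], 'created': r[3]}
               for r in cur.fetchall()]
    conn.close()
    return results, 200


class TestPetsIntegration(unittest.TestCase):
    def setUp(self):
        # Fresh database file per test, mirroring the bash set up step
        self.tmpdir = tempfile.TemporaryDirectory()
        self.db_path = os.path.join(self.tmpdir.name, 'int_test.db')
        create_schema(self.db_path)

    def tearDown(self):
        # Removes the directory and the database file inside it
        self.tmpdir.cleanup()

    def test_get_empty(self):
        self.assertEqual(get_pets(self.db_path), ([], 200))

    def test_put_then_get(self):
        _, status = put_pet(self.db_path, '1',
                            {'name': 'bobo', 'animal_type': 'buffoon'})
        self.assertEqual(status, 201)
        pets, status = get_pets(self.db_path)
        self.assertEqual(status, 200)
        self.assertEqual(len(pets), 1)
        self.assertEqual(pets[0]['name'], 'bobo')

    def test_filter_by_animal_type(self):
        put_pet(self.db_path, '1', {'name': 'bobo', 'animal_type': 'buffoon'})
        put_pet(self.db_path, '2', {'name': 'tom', 'animal_type': 'cat'})
        pets, _ = get_pets(self.db_path, animal_type='cat')
        self.assertEqual([p['name'] for p in pets], ['tom'])
```

The set up and tear down steps map directly onto the `rm -f ./int_test.db` lines in the bash version, and the filter test exercises the real query rather than a mocked cursor.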

In closing, some microservices do not require unit tests, and, generally speaking, there exists a wide swath of use cases for which unit testing should take a backseat to integration tests. When it comes to writing and implementing the integration tests, there are many exciting technologies to use, and I look forward to discussing these in more detail in a future post.